My research focuses on building controllable, identity-preserving generative models for image editing, composition, and restoration. I am particularly interested in problems where generation operates on real images, requiring outputs to remain consistent, recognizable, and faithful under diverse, real-world conditions.

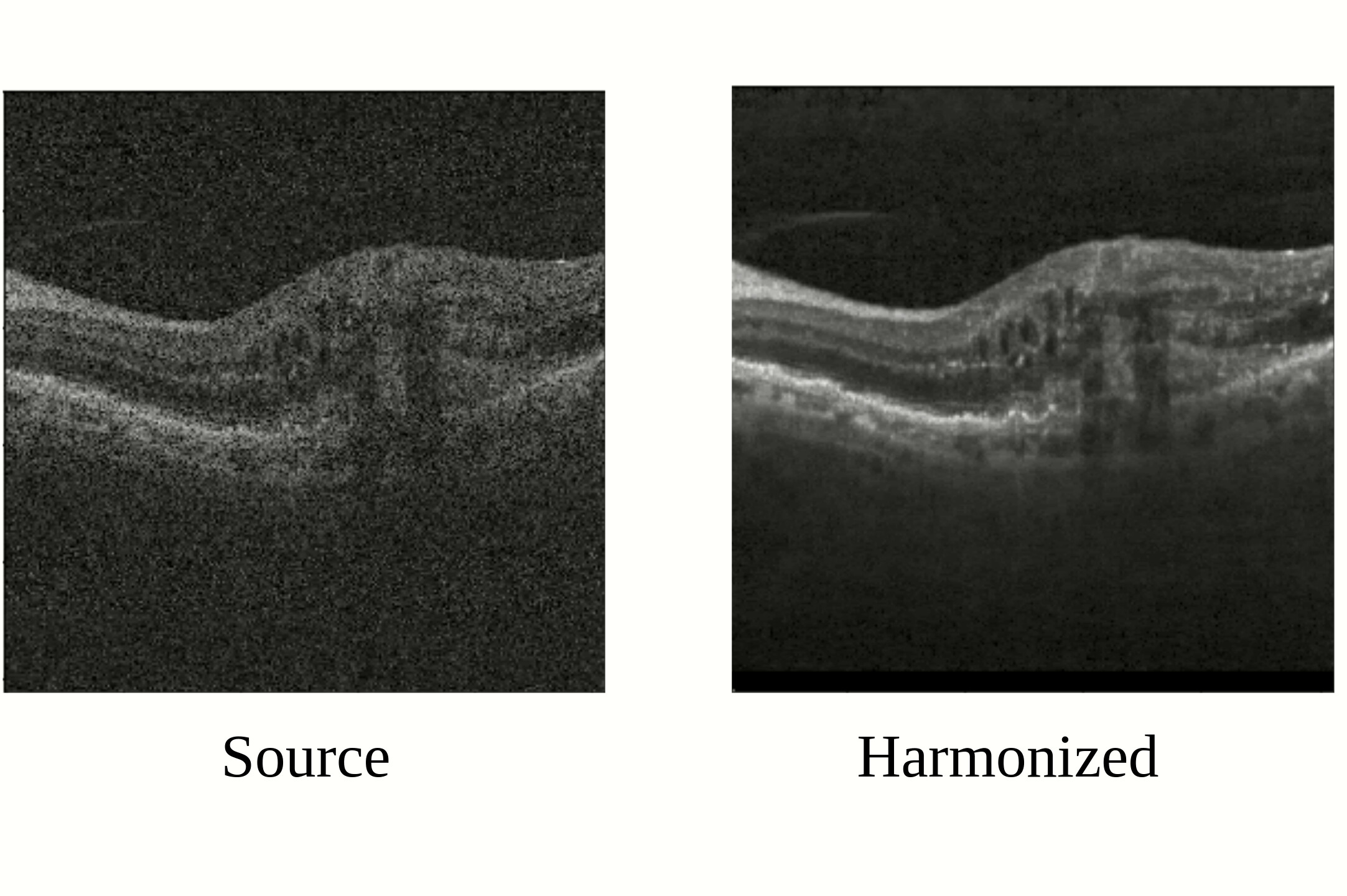

My work spans the full path from early research exploration to tech transfer into real products, including large-scale data curation, pipeline design, and deployment in production settings. At Adobe, I led the development of generative harmonization technology from prototype to production; it shipped as Harmonize in Photoshop, enabling photorealistic compositing at scale.

Currently, my research explores personalized generative models for photography, with a focus on preserving identity across editing and generation.

See the full publication list on Google Scholar.

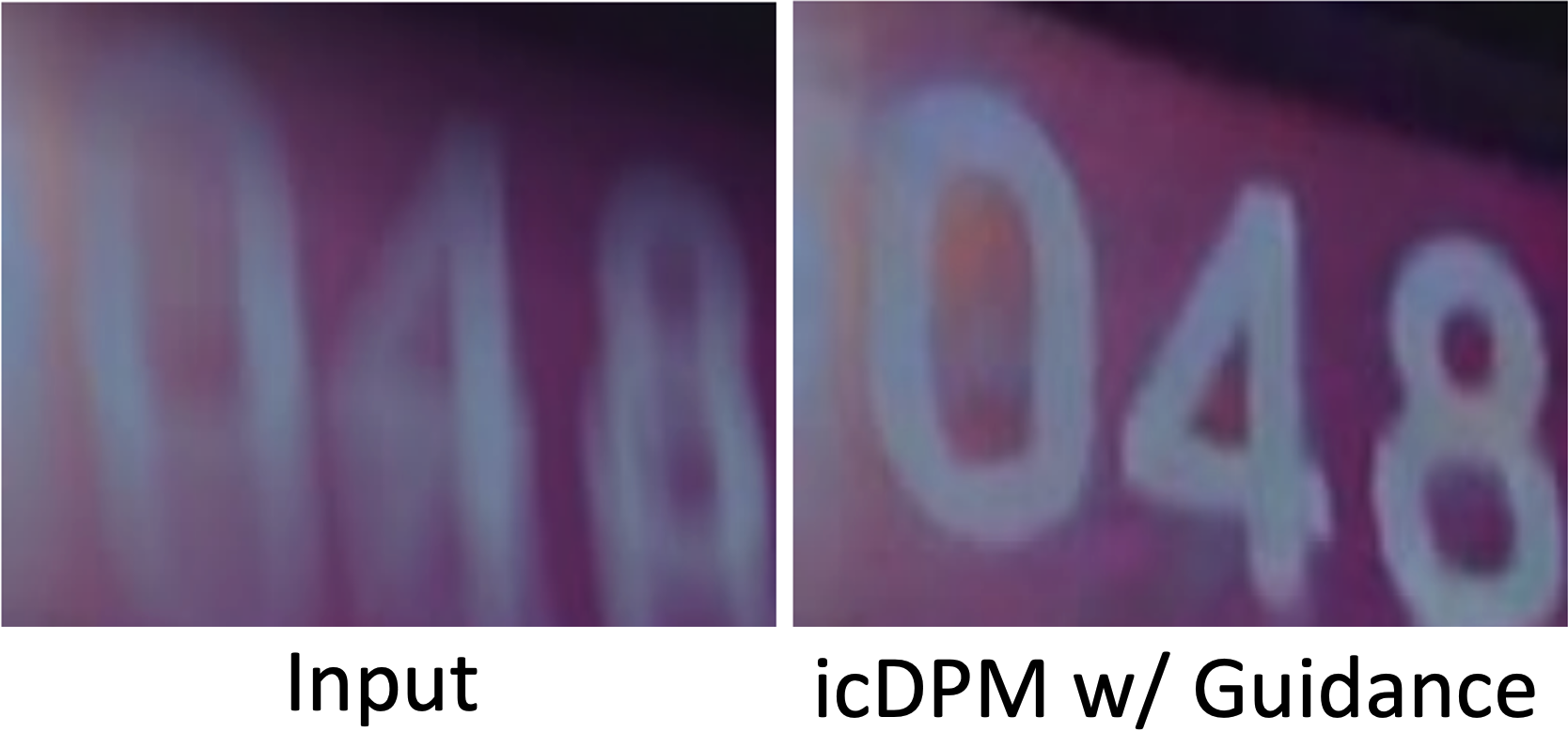

Generative Portrait Shadow Removal

A high-fidelity portrait shadow removal model that enhances portraits by predicting the underlying appearance beneath distracting shadows and highlights.

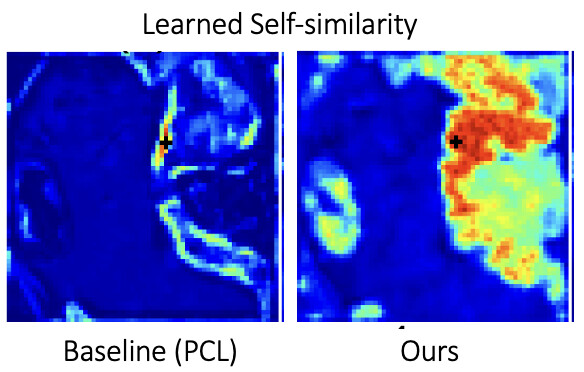

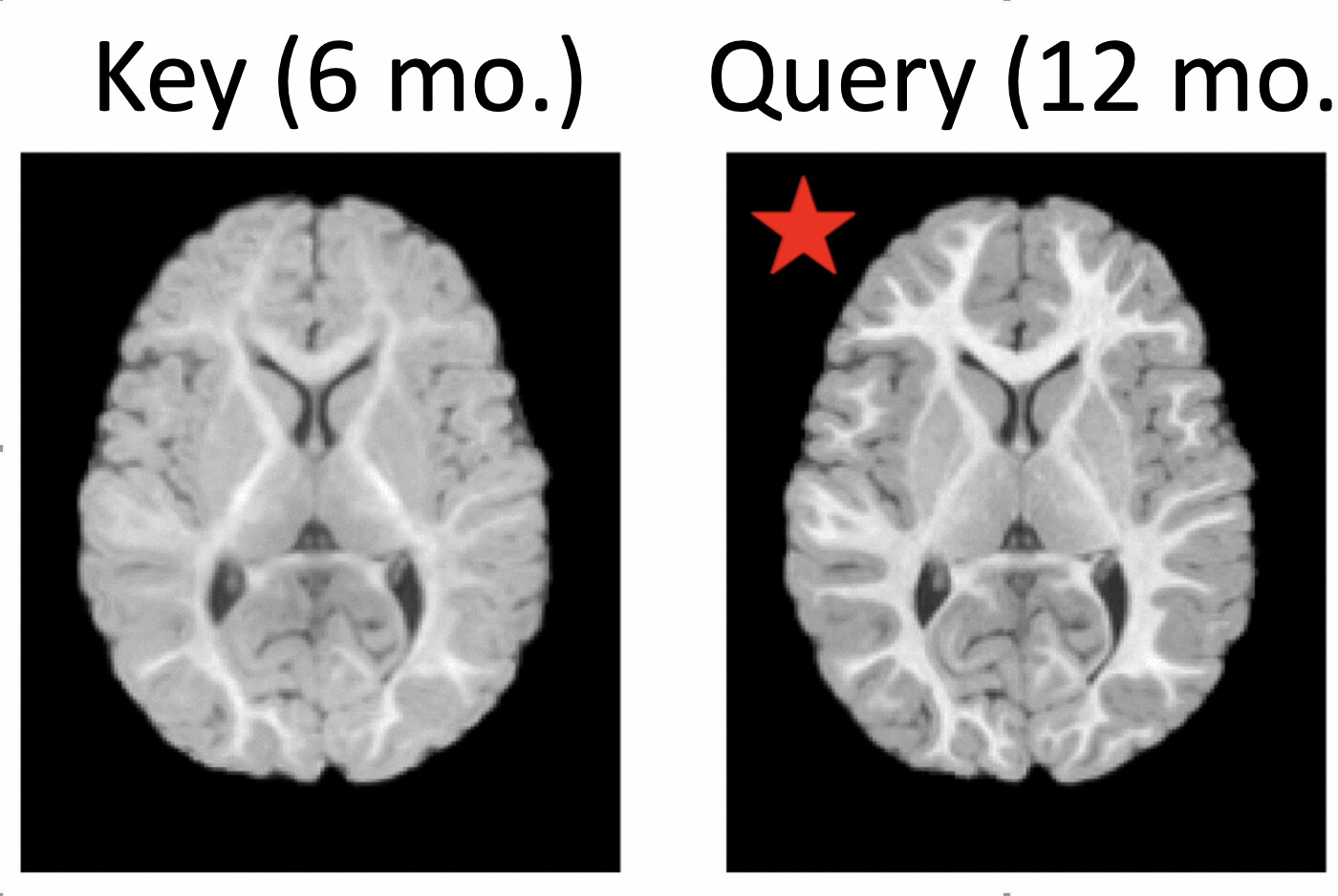

Keypoint-Augmented Self-Supervised Learning for Medical Image Segmentation with Limited Annotation

A keypoint-augmented fusion layer that extracts representations preserving both short- and long-range self-attention in a self-supervised manner.

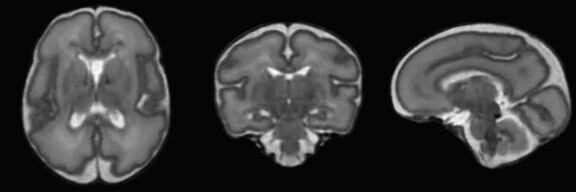

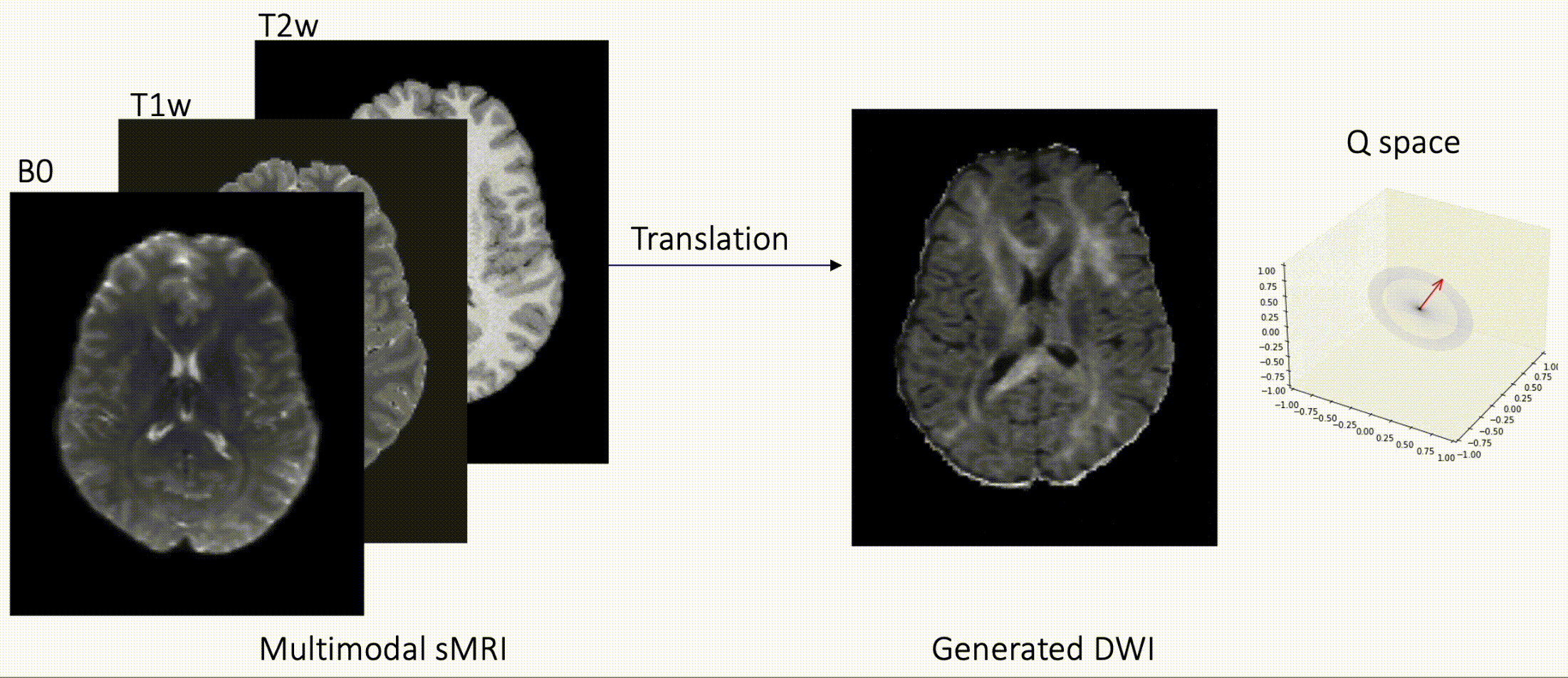

Q-space Conditioned Translation Networks for Directional Synthesis of Diffusion Weighted Images from Multi-modal Structural MRI

A generative adversarial translation framework for high-quality diffusion-weighted (DW) image estimation with arbitrary Q-space sampling, given commonly acquired structural images.